Fix Media Recording Issues Across All Browsers - Hexus


At Hexus, we are reimagining how go-to-market (GTM) teams create product content with AI. We are a centralized platform for building multi-modal interactive demos, how-to guides, and product updates that drive product-led growth. We make it easy to record, edit, personalize, and serve product collateral in minutes while providing advanced analytics and lead generation capabilities.

There are plenty of interesting challenges and unsolved problems in AI, such as RAG vs. prompt engineering, measuring quality, and building guardrails (more on those in later posts). What we did not anticipate was that simple media recording would turn out to be far from straightforward. Resolving media recording issues became an essential part of building a seamless experience.

The Hexus platform lets teams:

• Record in-browser product walkthroughs

• Edit and customize multimedia demos on the spot

• Instantly produce multi-format product content

• Distribute with deep analytics and lead generation hooks

The AI challenges that kept us occupied over the last two years, like Retrieval-Augmented Generation (RAG), automated quality checks, and strong guardrails, were fun to work on. The roadblock that caught us by surprise was building a seamless in-browser voice and video recording experience. What looked easy from the outside became a complex engineering journey.

This post walks through our technical journey toward building a browser media recording and playback system that works reliably and fast across browsers, platforms, and file formats.

Recording Voice-Over & Videos

At a high level, you simply record your product with Hexus, and we turn those recordings into a product guide in multiple formats: interactive tour, audio, video, how-to guides, blogs, and an AI search index for your help center, ready to serve within minutes.

As we continue to evolve Hexus, we're also adding more features. One of the top requests was for recording videos and audio on interactive demos. This gives creators more control over how they showcase their products to viewers, while also resolving media recording issues that previously limited flexibility.

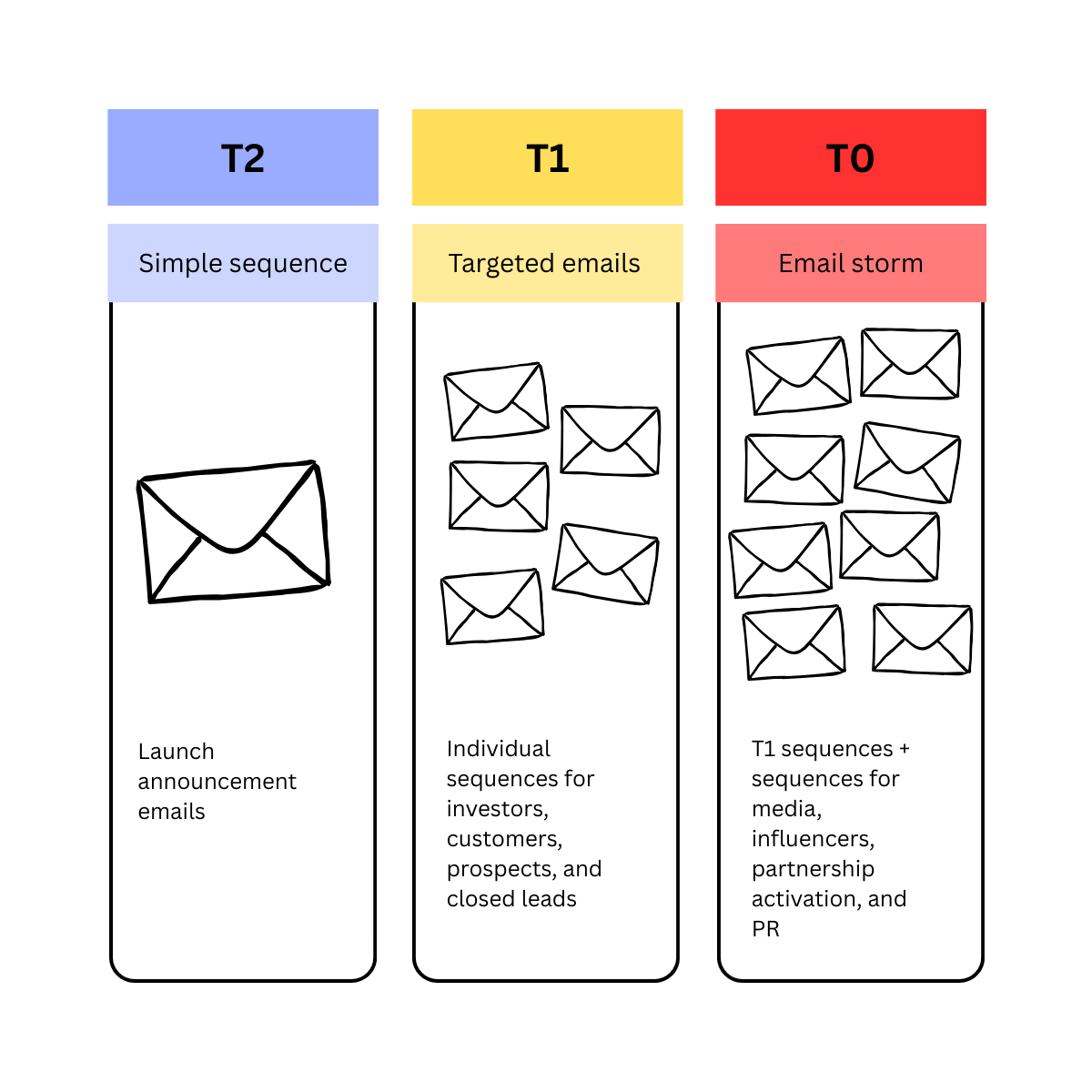

To support the recorded media uploads on Hexus demos, we laid out a few features and requirements for ourselves, to provide maximal quality and control:

- In-browser recording - with UX as our priority, we wanted to support in-app recording of audio and video, with no need to jump back and forth between third-party tools

- Media editing capabilities - in-app editing to help our creators do their best work and customize it to their requirements

- Cross-browser compatibility - Hexus demos must play back correctly in every major web browser

- Performance - a critical one. Many of our demos are embedded on customer websites and landing pages, so demos have to load and play quickly for viewers

Problem: Recording with Browser’s Native MediaRecorder

We started by using the browser's MediaRecorder API to record audio and video, uploading the recordings to our backend storage servers, linking them to Hexus demos, and finally serving the media links when a Hexus demo is viewed in a browser.

Initially, this seemed like a straightforward approach that would work seamlessly (engineering estimated 4 hours max!). However, we soon realized that recording and serving media remains quite complex across the major browsers.

The majority of our creators use Google Chrome to record their demos, and Chrome records media chunks as video/webm and audio/webm. These formats simply don’t play out of the box on Safari, the browser used by a significant number of end users watching Hexus demos on their phones!

A Google Chrome bug asking for improved media recording formats has been open for 8 years now [link].
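One way to see the format mismatch for yourself is to probe what a browser is willing to record. The sketch below is illustrative only: the candidate list and the helper name `pickRecordingMimeType` are assumptions, not part of the Hexus codebase. The support check is passed in as a function so the logic can run outside a browser; in a page you would pass `(t) => MediaRecorder.isTypeSupported(t)`.

```typescript
// Candidate container/codec strings, most broadly playable first.
// The ordering is an assumption for illustration only.
const CANDIDATE_TYPES: readonly string[] = [
  "video/mp4",
  "video/webm;codecs=vp9,opus",
  "video/webm;codecs=vp8,opus",
  "video/webm",
];

// Returns the first candidate the recorder supports, or undefined so the
// caller can fall back to the browser's default format.
function pickRecordingMimeType(
  isSupported: (mimeType: string) => boolean,
  candidates: readonly string[] = CANDIDATE_TYPES
): string | undefined {
  for (const type of candidates) {
    if (isSupported(type)) {
      return type;
    }
  }
  return undefined;
}

// Simulates a browser that can only record WebM.
const webmOnly = (type: string): boolean => type.indexOf("video/webm") === 0;
console.log(pickRecordingMimeType(webmOnly));
```

In a real page the result would feed the recorder options, for example `new MediaRecorder(stream, chosen ? { mimeType: chosen } : undefined)`. Probing does not remove the need for transcoding: a recording made in a WebM-only browser still has to be converted before it can play everywhere.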

Another problem: video/webm and audio/webm files recorded on Chrome lacked the properties we needed, namely media duration metadata, a seekable file layout, and low-bitrate compression. We rely on these for fast loading in the browser and for advanced features like jumping to a specific point in a video during editing.

Search for Solutions

A bit of research (ChatGPT + StackOverflow + Chrome bug tracker + WebKit bugs) set us on the path of encoding video and audio files in the video/mp4 and audio/mp3 formats. Both are performant and supported on all browsers, which gives end users a great viewing experience and creators easy editing capabilities.

So far so good, or so we thought. This should be easy: use an open source library to encode videos to video/mp4 and audio to audio/mp3 before storing them on the backend. That would fix multiple issues in one go!

Initially, we implemented this with the open source library ffmpeg-wasm (WebAssembly + JavaScript) to encode the media in the browser before uploading it to our backend. While this worked well, it had some issues of its own:

- Media encoding is resource intensive: the longer the recording, the more CPU time and memory it takes to encode before uploading. This led to a poor experience for our creators, who now had to wait several seconds or even minutes for their recordings to be encoded before they could move on to the next step. It did not scale well for recordings longer than a few seconds!

- It also ran the risk of browser-side crashes, since we have no control over the CPU and memory resources available to ffmpeg-wasm.

- Setting up ffmpeg-wasm to be performant in browsers was also challenging, as multi-threaded encoding requires cross-origin isolation and constant testing across all browsers.
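To make the cross-origin isolation requirement concrete: browsers only expose `SharedArrayBuffer`, which multi-threaded WebAssembly depends on, to pages served with a specific pair of response headers. The sketch below lists one valid combination and shows a fallback decision. The `chooseEncoderMode` helper and its mode names are hypothetical and are not part of ffmpeg-wasm.

```typescript
// Response headers that opt a page into cross-origin isolation.
// "require-corp" is one accepted value for the embedder policy.
const CROSS_ORIGIN_ISOLATION_HEADERS: Readonly<Record<string, string>> = {
  "Cross-Origin-Opener-Policy": "same-origin",
  "Cross-Origin-Embedder-Policy": "require-corp",
};

type EncoderMode = "multi-threaded" | "single-threaded";

// Picks an encoder build from the page's isolation state (hypothetical helper).
// In a browser, pass the global `crossOriginIsolated` boolean.
function chooseEncoderMode(isIsolated: boolean): EncoderMode {
  return isIsolated ? "multi-threaded" : "single-threaded";
}

console.log(CROSS_ORIGIN_ISOLATION_HEADERS);
console.log(chooseEncoderMode(false));
```

The catch is that `require-corp` blocks cross-origin resources that do not opt in, so enabling these headers can affect third-party scripts, images, and embeds on the same page.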

Now that we knew what needed to be done, the only remaining challenge was to make it fast and reliable. So we moved the media encoding to the server side, where we had more control:

- We could provision as much CPU and memory on the server side as needed to encode large media files reliably within a few seconds.

- We could do this asynchronously, without making our creators wait while they create and edit demos, for a smooth user experience.

A Server-Side Approach

We went ahead with the following approach:

- We set up an AWS Lambda serverless function with an FFmpeg executable that downloads the browser-recorded media files from S3, encodes them to video/mp4 (video) or audio/mp3 (audio), and uploads the result back to S3, ready to serve across all browsers.

- When browser-recorded audio or video files are uploaded to our servers, the AWS Lambda function is triggered asynchronously to encode the media files and replace them in place.

Hexus media encoding/serving architecture
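As a rough illustration of the encoding step, the sketch below builds FFmpeg argument lists for the two target formats and decodes an object key as it arrives in an S3 event notification. The codec choices, bitrates, file paths, and function names are assumptions for illustration, not the settings Hexus uses, and the FFmpeg build is assumed to include libx264 and libmp3lame.

```typescript
type MediaKind = "video" | "audio";

// Builds an FFmpeg argument list for re-encoding a browser recording.
// All quality settings here are illustrative assumptions.
function buildFfmpegArgs(kind: MediaKind, inputPath: string, outputPath: string): string[] {
  if (kind === "video") {
    return [
      "-y", "-i", inputPath,
      "-c:v", "libx264", "-preset", "veryfast", "-crf", "23",
      "-pix_fmt", "yuv420p",      // widely compatible pixel format
      "-c:a", "aac", "-b:a", "128k",
      "-movflags", "+faststart",  // move the index to the front of the file
      outputPath,
    ];
  }
  // Audio only: drop any video stream and encode to MP3.
  return ["-y", "-i", inputPath, "-vn", "-c:a", "libmp3lame", "-b:a", "128k", outputPath];
}

// S3 event notifications URL-encode object keys and use "+" for spaces.
function decodeS3EventKey(rawKey: string): string {
  return decodeURIComponent(rawKey.replace(/\+/g, " "));
}

// Hypothetical key and paths; /tmp is the writable directory inside Lambda.
const key = decodeS3EventKey("recordings/demo+1/voice%20over.webm");
const args = buildFfmpegArgs("audio", "/tmp/input.webm", "/tmp/output.mp3");
console.log(key);
console.log(["ffmpeg"].concat(args).join(" "));
```

A handler would download the object to the input path, run FFmpeg with these arguments, and upload the output. Re-encoding writes a complete container with duration and seek information, and `+faststart` places the MP4 index at the start of the file so playback can begin before the whole file has downloaded.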

Using AWS Lambda on our backend fit our use case for multiple reasons:

- It’s easy to provision the memory and compute a fast media conversion workload needs.

- AWS Lambda works well with S3 storage, allowing fast downloads and uploads of media files without high network latency or extra cost.

- AWS Lambda allows up to 15 minutes of execution time per invocation, which is more than sufficient for converting even the largest media files we’ve observed, at several GB.

- It supports on-demand scaling, i.e., concurrent AWS Lambda instances are provisioned without extra effort, so we can handle a large number of uploads at the same time.

Takeaways

Working on the media encoding pipeline for Hexus, we took away a few lessons:

Media formats and standards across browsers are highly fragmented - thanks to browser wars from Apple & Google. The burden is on devs to make sure they can record and serve media across platforms for compatibility.

The current ecosystem largely depends on open source solutions to make media encoding and playback cross browser compatible, because for one

While implementing your own media encoding and serving pipeline, prioritize a solution based on:

Media creator experience: easy and fast media recording/uploads with no extra steps

Media consumer experience: cross-browser compatibility, because you never know what browser your users might end up using!

Performance & Scale of your system: Whatever solution you end up choosing, browser or server side encoding, it should be easier for your engineering team to maintain and update with growing use cases. Optimize for a greater degree of control.

As we continue to refine the quality, develop new features, and optimize the pipeline for speed and cost, we may look at other solutions such as batch operations and dedicated servers. Or maybe Chrome and Safari will start writing the correct metadata into recordings for us.

If you want to join our engineering team, check out our careers page. Bonus points if you have ideas to simplify the solution.

Join Our Team

If you are excited about solving deep media infrastructure problems while building tools for GTM teams, drop us a line. See our careers page.

Bonus points if you come up with tricks to speed up video encoding, improve playback performance, or contribute to resolving media recording issues to make this pipeline even simpler.

Let's develop the future of product storytelling together.

Connect with Hexus.ai today!
